Most enterprise AI projects follow a familiar pattern: experimenting with AI, launching proofs of concept (POCs), and funding pilot initiatives. Yet, a large number of these initiatives never make it beyond the pilot stage. According to an analysis by RAND Corporation, over 80% of AI projects fail, which is nearly twice the failure rate of non-AI technology projects.

So, what goes wrong?

In this blog, we explore the root causes behind AI project failures and how the AI Decision Tool can help enterprises evaluate their AI strategy.

Here are some of the most common reasons enterprise AI projects fail to move beyond pilots:

Most enterprises struggle to define success. They start AI projects as technical experiments instead of addressing specific business challenges. Projects may then continue without clear goals or focus on areas with little impact on the bottom line.

Without clear, measurable objectives, it is difficult to justify further investment or show AI ROI. And even well-designed AI solutions are abandoned if they do not deliver meaningful outcomes.

For example, an AI chatbot for a financial institution may seem like a great idea, but if customers lack financial literacy, the solution may not deliver significant value.

High-quality data is essential for a successful AI pilot. However, many enterprises struggle with data quality issues. When AI models run on raw data in production, the result is often semantic drift and inconsistent values for key metrics such as revenue, customer churn, and conversion rates. This undermines trust in AI outputs and hinders enterprise-wide adoption.
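To make the inconsistency concrete, here is a minimal Python sketch of one way it can arise: two teams compute "churn rate" from the same raw records using different definitions. The records, the function names, and the 90-day inactivity threshold are all hypothetical and chosen only for illustration.

```python
from datetime import date

# Hypothetical raw customer records: (customer_id, last_purchase, cancelled)
customers = [
    ("c1", date(2024, 1, 5), False),
    ("c2", date(2023, 9, 1), False),
    ("c3", date(2023, 12, 20), True),
    ("c4", date(2023, 6, 15), False),
]
AS_OF = date(2024, 1, 31)  # assumed reporting date


def churn_by_cancellation(rows):
    """Definition A: a customer has churned only if they explicitly cancelled."""
    return sum(1 for _, _, cancelled in rows if cancelled) / len(rows)


def churn_by_inactivity(rows, as_of=AS_OF, max_idle_days=90):
    """Definition B: a customer has churned if idle longer than max_idle_days."""
    idle = sum(1 for _, last, _ in rows if (as_of - last).days > max_idle_days)
    return idle / len(rows)


print(churn_by_cancellation(customers))  # 0.25
print(churn_by_inactivity(customers))    # 0.5
```

Both numbers are labeled "churn", yet they differ by a factor of two. A model fed one definition in training and the other in production would produce results that the business cannot reconcile.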

A common misconception is that AI inherently understands business logic. Enterprises expect AI to solve complex problems instantly, but it requires ongoing training, monitoring, and refinement. Unrealistic expectations cause disappointment and abandoned projects.

Scaling AI brings technical and organizational challenges. Teams need to learn new workflows, build the right skills, and change how they work. AI projects can disrupt existing routines, which may cause employees to feel uncertain or skeptical. If teams do not get enough training and support, they might go back to manual processes after the first trial.

AI projects can run into problems like biased or outdated training data, privacy and regulatory issues, and a lack of transparency in how models make decisions. Because of these AI risks, many enterprises are cautious about deploying AI widely in high-stakes situations, where they worry about legal, compliance, and reputational problems.

Many enterprises believe that AI adoption fails because of inadequate technology. However, the primary challenge often lies in how AI initiatives are pursued. Without clear criteria to evaluate an AI project’s readiness or value, enterprises often invest in initiatives driven by hype or isolated experimentation.

As a result, even promising pilots rarely develop into sustainable, high-impact solutions. The underlying problem isn’t the technology but weak decision-making and insufficient data foundations.

This is where a structured evaluation framework becomes critical.

The AI Decision Tool offers a systematic framework for evaluating AI use cases and allocating resources. It helps enterprises shift from ad hoc decision-making to a more responsible approach to selecting AI initiatives, and to achieve long-term digital transformation. The tool evaluates AI projects based on:

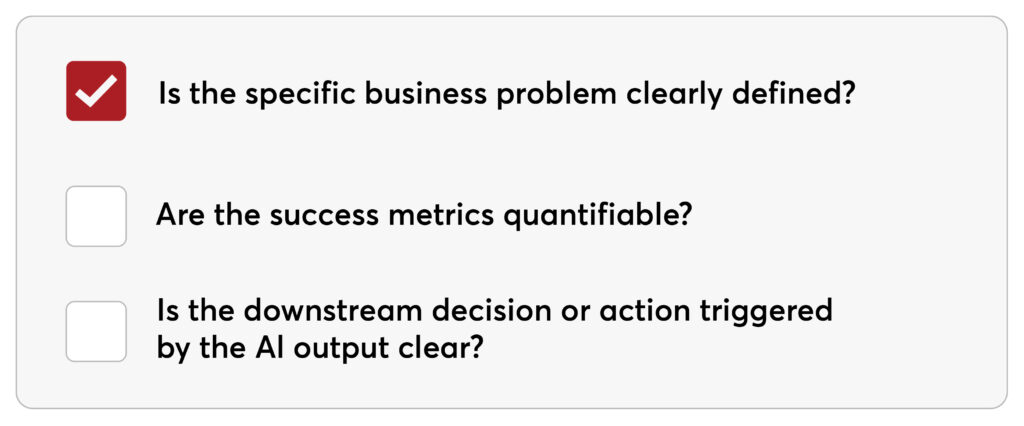

Is the business problem clearly defined? Is success measurable, and is the downstream decision or action triggered by the AI output clear?

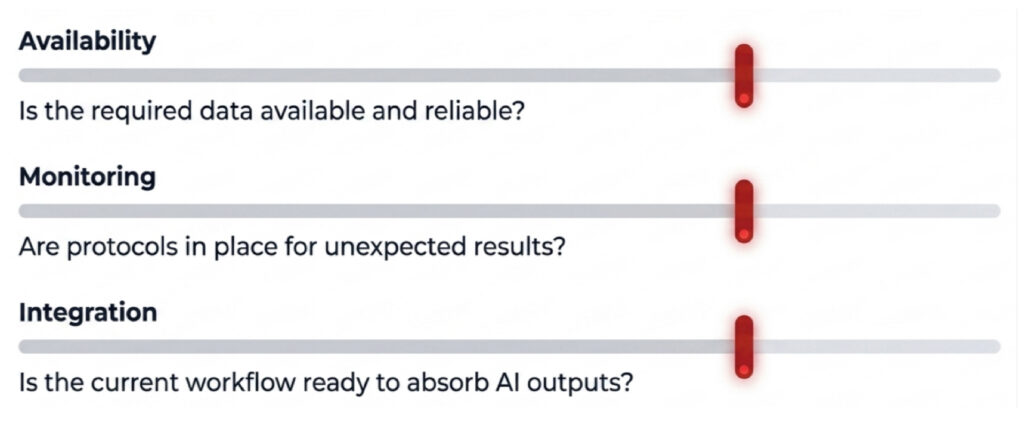

Is the data available and reliable? Is monitoring planned for unexpected results? Is the workflow ready for integration?

Is AI governance covered? Can humans escalate a questionable AI output? Who owns liability?
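The questions above can be treated as a simple readiness checklist. The Python sketch below is an illustration only: the question wording is paraphrased from this article, and the rule that every question must be answered "yes" before scaling is an assumption, not the AI Decision Tool's actual scoring logic. The names `CHECKLIST`, `open_gaps`, and `ready_to_scale` are hypothetical.

```python
# Questions paraphrased from the article. The all-yes rule is an assumption,
# not the AI Decision Tool's actual logic.
CHECKLIST = [
    "Is the business problem clearly defined?",
    "Is success measurable?",
    "Is the downstream decision or action clear?",
    "Is the data available and reliable?",
    "Is monitoring planned for unexpected results?",
    "Is the workflow ready for integration?",
    "Is AI governance covered?",
    "Is there a human escalation path?",
    "Is a liability owner named?",
]


def open_gaps(answers):
    """Return the checklist questions answered 'no' or not answered at all."""
    return [q for q in CHECKLIST if not answers.get(q, False)]


def ready_to_scale(answers):
    """A use case passes only when no gaps remain (assumed rule)."""
    return not open_gaps(answers)


# Usage: a pilot with everything in place except a named liability owner.
pilot = {q: True for q in CHECKLIST}
pilot["Is a liability owner named?"] = False

print(ready_to_scale(pilot))  # False
print(open_gaps(pilot))       # ['Is a liability owner named?']
```

Listing the open gaps, rather than returning a single score, keeps the conversation focused on what must be fixed before a pilot receives further investment.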

To avoid failure, enterprises need to execute AI projects with discipline. By tackling AI execution challenges like data readiness, governance, and team alignment, companies can move from small pilots to AI projects that scale and deliver lasting value.